A computer cluster consists of a set of computers connected together so that they work as one and can be viewed as a single system. Unlike grid computers, the nodes of a cluster are each set to perform the same task, controlled and scheduled by software.

Cluster components are usually connected to one another through a Local Area Network (LAN), with each node (a computer used as a server) running its own instance of an operating system. In most cases all nodes use the same hardware and the same operating system, although some setups, such as those using Open Source Cluster Application Resources (OSCAR), allow different operating systems or different hardware on each computer.

Clusters are usually deployed to improve performance and availability over what a single computer can offer, while typically being much more cost-effective than a single computer of comparable speed or availability. Computer clusters emerged from the convergence of several computing trends, including the availability of low-cost microprocessors, high-speed networks, and software for high-performance distributed computing.

Clusters have a wide range of applications and deployments, from small business clusters with a handful of nodes to some of the world's fastest supercomputers, such as IBM Sequoia. The range of applications remains limited, however, because the software must be purpose-built for each task, so clusters are not practical for casual, general-purpose computing.

Clusters are designed mainly with performance in mind, but installations are also driven by many other factors. Fault tolerance, the ability of the system to keep working when individual nodes fail, also allows for simple scalability; in high-performance settings, clusters additionally offer a low frequency of maintenance routines, resource consolidation, and centralized management.
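The fault-tolerance idea can be illustrated with a small single-process simulation. This is a minimal sketch under stated assumptions, not how any particular cluster software works: the `Node` class and the `run_with_failover` helper are hypothetical, a node marked `healthy=False` stands in for a crashed machine, and tasks are dispatched round-robin. When a node is down, its task is simply retried on the next node, so the job completes as long as at least one node is still healthy.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """Hypothetical cluster node; healthy=False simulates a crashed machine."""
    name: str
    healthy: bool = True
    completed: list = field(default_factory=list)

    def run(self, task: int) -> int:
        if not self.healthy:
            raise ConnectionError(f"{self.name} is down")
        self.completed.append(task)
        return task * task  # stand-in for real work


def run_with_failover(tasks, nodes):
    """Dispatch tasks round-robin; if a node fails, retry the task on the next node."""
    results = {}
    i = 0
    for task in tasks:
        for _ in range(len(nodes)):  # try each node at most once per task
            node = nodes[i % len(nodes)]
            i += 1
            try:
                results[task] = node.run(task)
                break
            except ConnectionError:
                continue  # node is down: move on to the next one
        else:
            raise RuntimeError("no healthy nodes left")
    return results


nodes = [Node("node1"), Node("node2", healthy=False), Node("node3")]
print(run_with_failover(range(6), nodes))
# {0: 0, 1: 1, 2: 4, 3: 9, 4: 16, 5: 25}
```

Even with `node2` down, every task finishes; the work it would have received is absorbed by `node3`. Real clusters detect failures with heartbeats or timeouts rather than an immediate exception, but the principle of reassigning work away from a dead node is the same.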

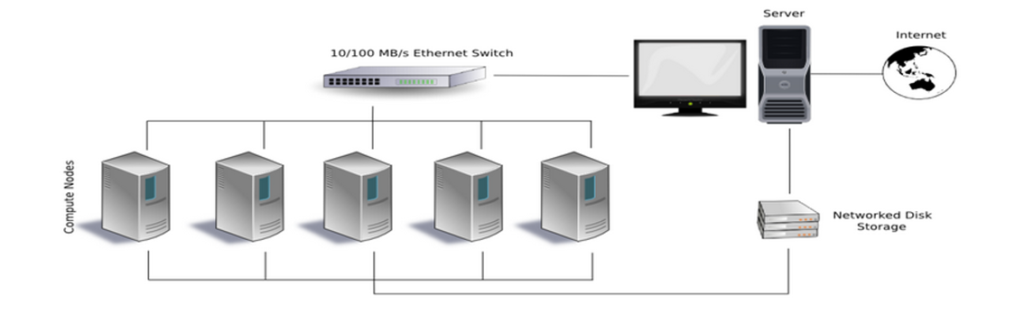

Figure 1.0 shows the design of a cluster.

In the Beowulf system, the application programs never see the compute nodes (also called slave computers) and interact only with the “Master”, a dedicated computer that handles scheduling and management. In a typical implementation, the Master has two network interfaces: one that communicates with the private Beowulf network and one for the organization's general-purpose network. The compute nodes usually run their own copy of the same operating system and have their own local memory and disk space. However, the private network may also have a large shared file server that stores global persistent data, accessed by the compute nodes as needed.
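The Master's role as the single entry point can be sketched in a few lines of Python. This is a simulation under stated assumptions, not Beowulf software: the `ComputeNode` and `Master` classes are hypothetical, threads from `concurrent.futures` stand in for the private network, and plain round-robin stands in for whatever scheduler a real installation uses.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import cycle


class ComputeNode:
    """Hypothetical compute ("slave") node on the private cluster network."""

    def __init__(self, name: str):
        self.name = name

    def execute(self, func, arg):
        # A real node would receive the job over the network; here we just call it.
        return func(arg)


class Master:
    """Hypothetical master node: the single entry point the application talks to."""

    def __init__(self, nodes):
        self._nodes = list(nodes)
        self._next_node = cycle(self._nodes)  # simple round-robin scheduling
        self.assignments = []                 # (argument, node name) log, for inspection

    def map(self, func, args):
        """Spread func(arg) calls across the compute nodes; return results in order."""
        with ThreadPoolExecutor(max_workers=len(self._nodes)) as pool:
            futures = []
            for arg in args:
                node = next(self._next_node)
                self.assignments.append((arg, node.name))
                futures.append(pool.submit(node.execute, func, arg))
            # Results come back in submission order, whichever node ran each job.
            return [f.result() for f in futures]


# After setup, the application submits work only through the Master.
master = Master(ComputeNode(f"node{i}") for i in range(3))
print(master.map(lambda x: x * 10, range(5)))  # [0, 10, 20, 30, 40]
```

The caller never names a compute node; which node ran which job is visible only in the Master's own `assignments` log, mirroring how the Beowulf Master hides the private network from application programs.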

As the computing power of each generation of game consoles has increased, new uses have emerged in which consoles are repurposed into High Performance Computing (HPC) clusters. Examples of game console clusters include the Sony PlayStation cluster and the Microsoft Xbox cluster. Another example built from consumer gaming technology is the Nvidia Tesla Personal Supercomputer workstation, which uses multiple graphics accelerator processor chips.

Apart from game consoles, high-end graphics cards can also be used. Using graphics cards (GPUs) to perform calculations for grid computing is much more economical than using CPUs, although GPUs are less precise. However, when using double-precision values, they become as precise to work with as CPUs and are still much cheaper to purchase.
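To make the precision trade-off concrete, the sketch below compares single-precision (32-bit) and double-precision (64-bit) accumulation. It is a CPU-only illustration, not GPU code: it assumes Python's `float` is an IEEE 754 double (true on common platforms) and emulates 32-bit arithmetic by rounding every intermediate sum through the standard `struct` module. The helper names `to_float32` and `accumulate` are hypothetical.

```python
import struct


def to_float32(x: float) -> float:
    """Round a Python float (an IEEE 754 double) to the nearest single-precision value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def accumulate(step: float, n: int, single: bool) -> float:
    """Add `step` to a running total n times, in single or double precision."""
    if single:
        step = to_float32(step)
    total = 0.0
    for _ in range(n):
        total += step
        if single:
            total = to_float32(total)  # emulate a 32-bit register after each add
    return total


n = 100_000
exact = 10_000.0  # 0.1 * 100,000 in exact arithmetic
sum32 = accumulate(0.1, n, single=True)
sum64 = accumulate(0.1, n, single=False)
print(f"single-precision error: {abs(sum32 - exact):.6g}")
print(f"double-precision error: {abs(sum64 - exact):.6g}")
```

Rounding a double-precision sum back to 32 bits after each addition gives the same result as native single-precision addition, so the single-precision error printed here is representative of 32-bit hardware, and it is many orders of magnitude larger than the double-precision error.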

Computer clusters have traditionally run on separate physical computers with the same operating system. With the advent of virtualization, cluster nodes can run on separate physical computers with different operating systems, with a virtualization layer on top that makes them appear alike. The cluster can also be virtualized in various configurations while maintenance is in progress; an example implementation is Xen as the virtualization manager with Linux-HA.